If you haven’t used Notion yet, I’m sure you’ve heard of it and how it’s a great alternative to other note-taking/organising apps such as Evernote and Microsoft OneNote. And if you have tried Notion, like me, you were probably a little lost at first and may have quickly turned back to your previous, familiar solution.

But hopefully you’re willing to give it a proper try.

See, Notion is like a giant forest with many, many ways of navigating through it, and all of them are correct. It’s just a matter of finding the one that suits you best.

Unlike its counterparts, which simply give you a hierarchy of folders and files (or Notebooks and Notes), Notion requires you to put in some work to find the best structure for your particular data.

It took me a while to get my head around it, but it was worth the effort.

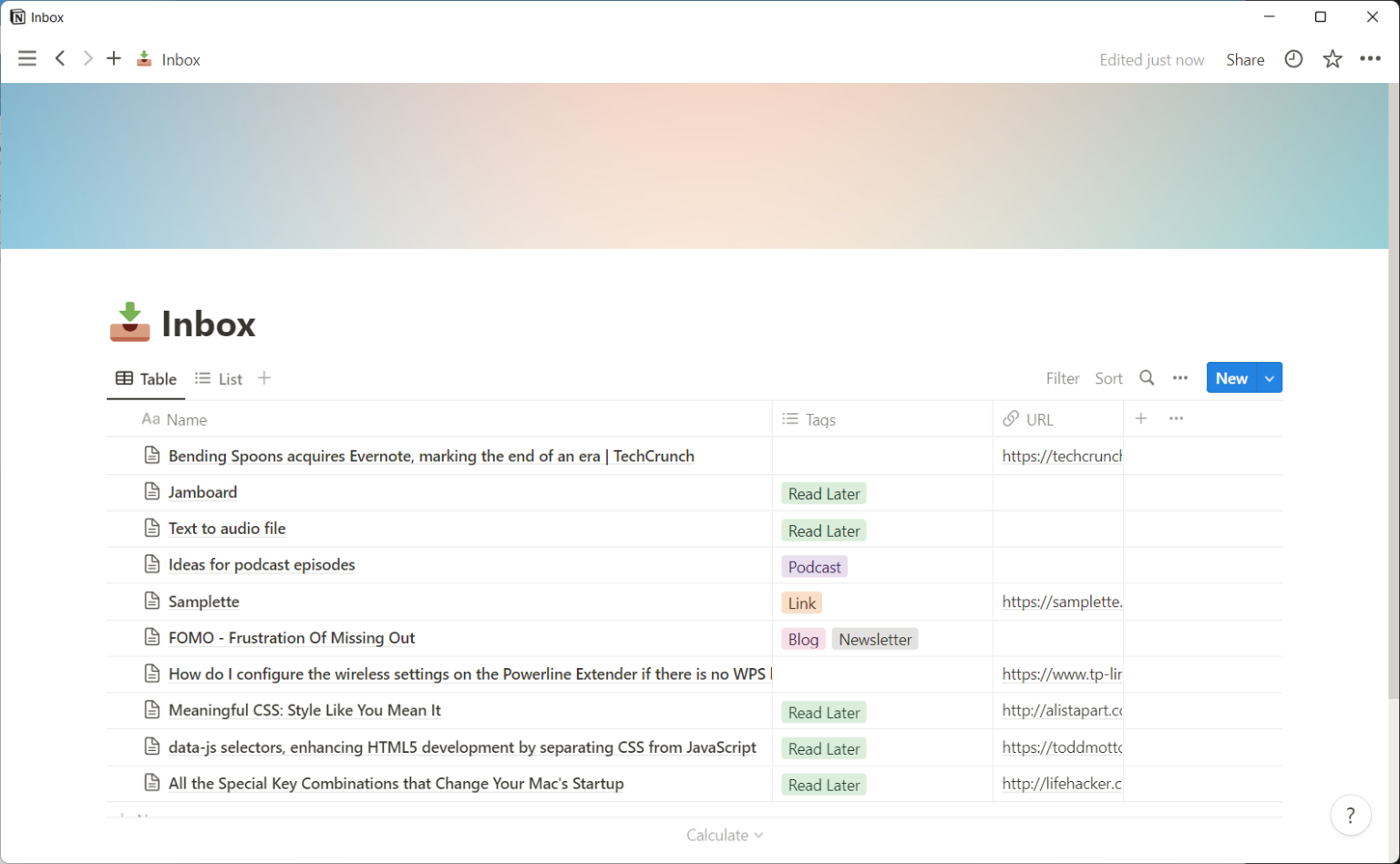

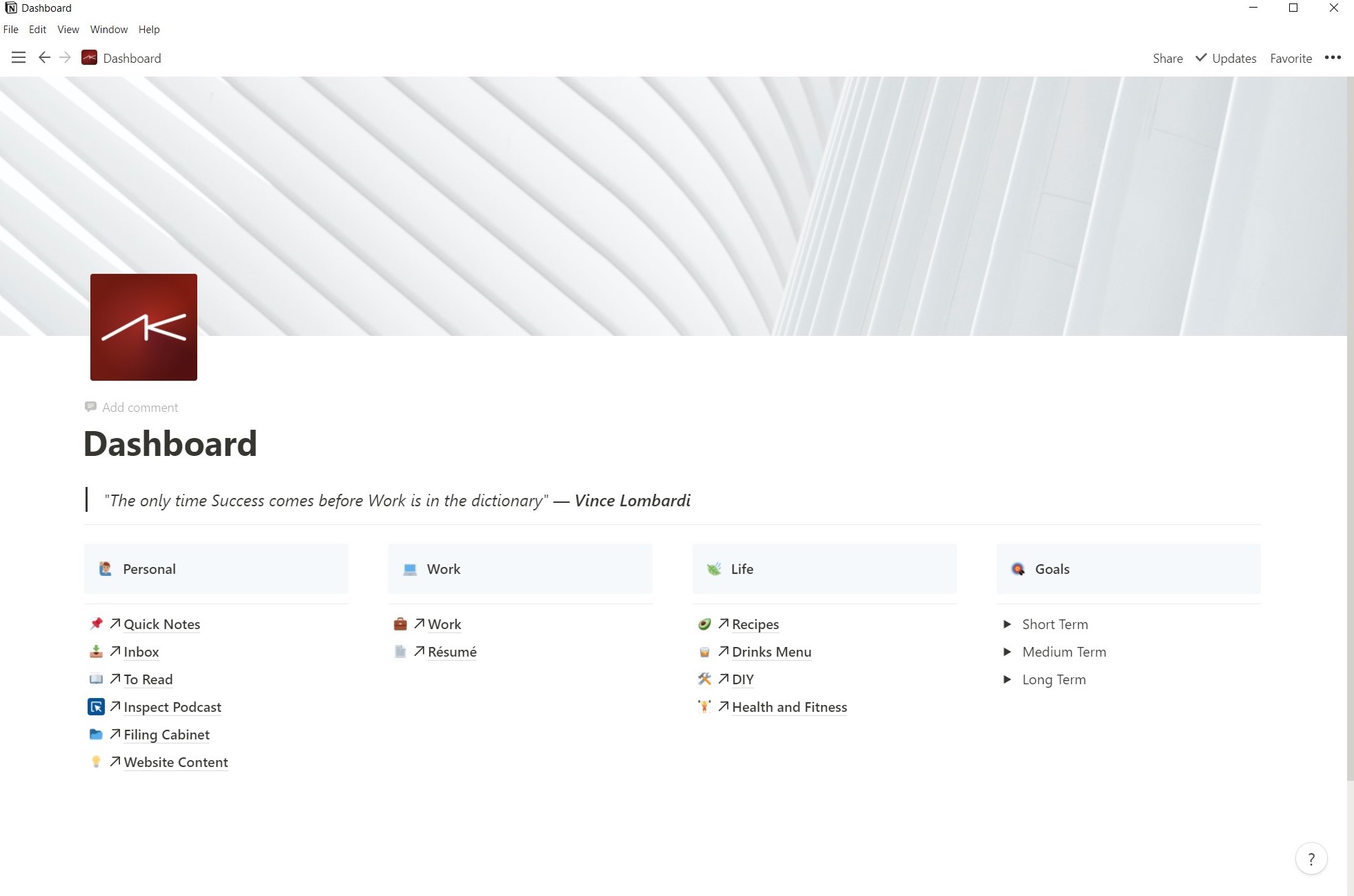

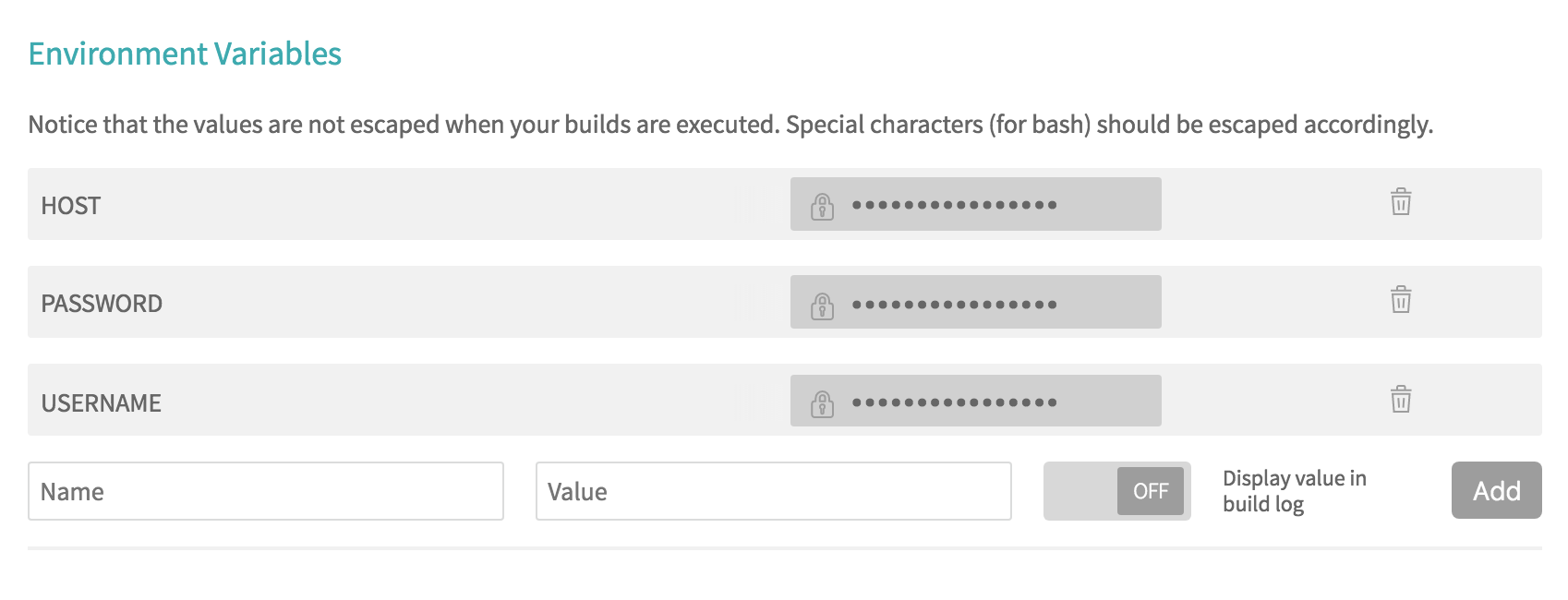

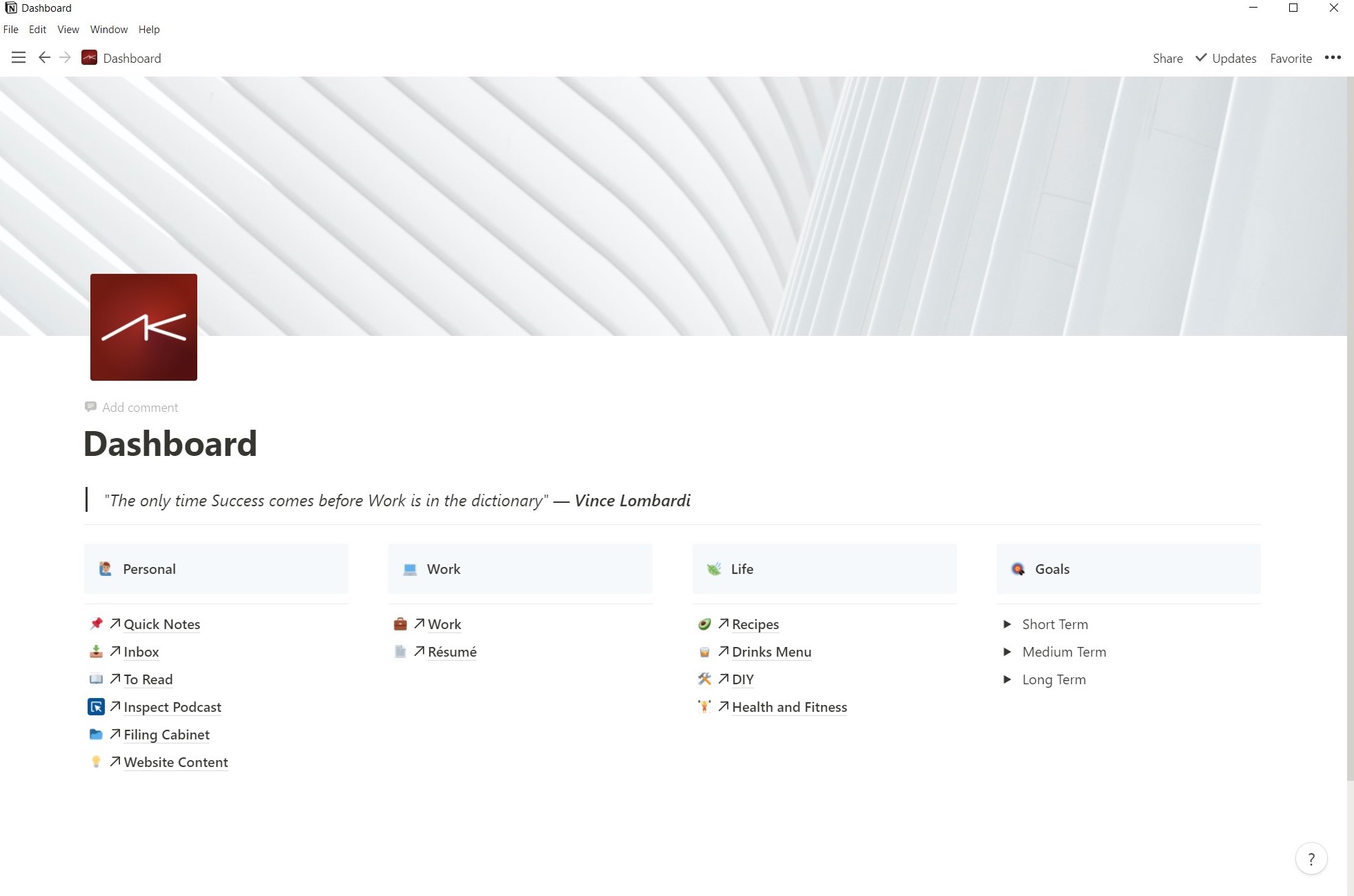

My Notion Dashboard

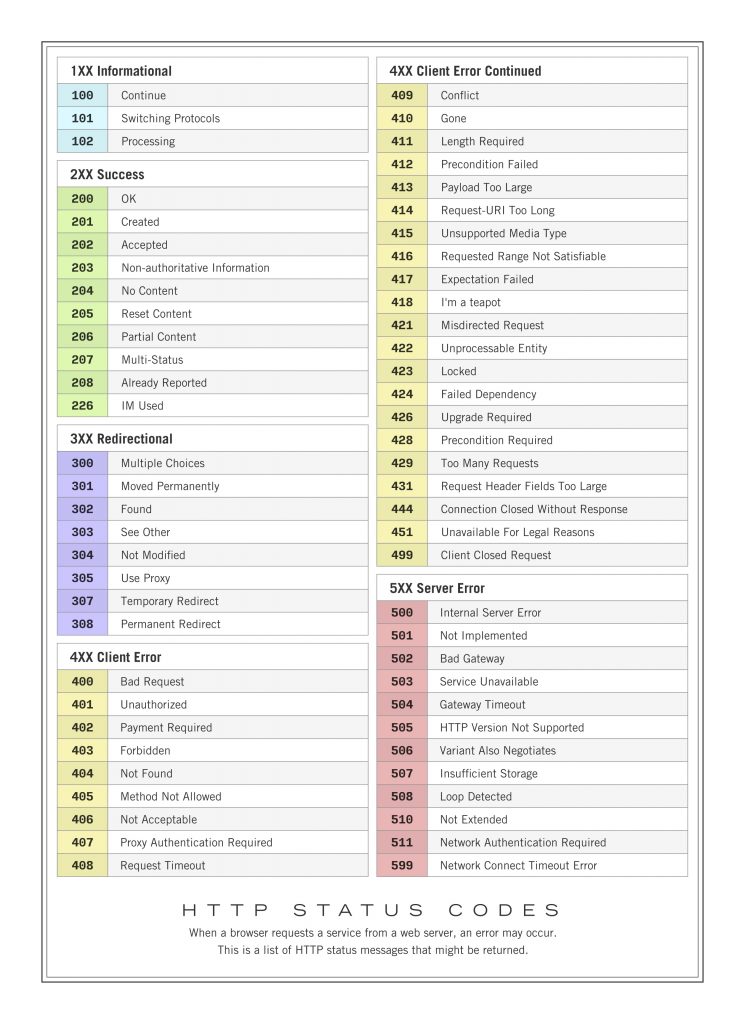

Data organisation

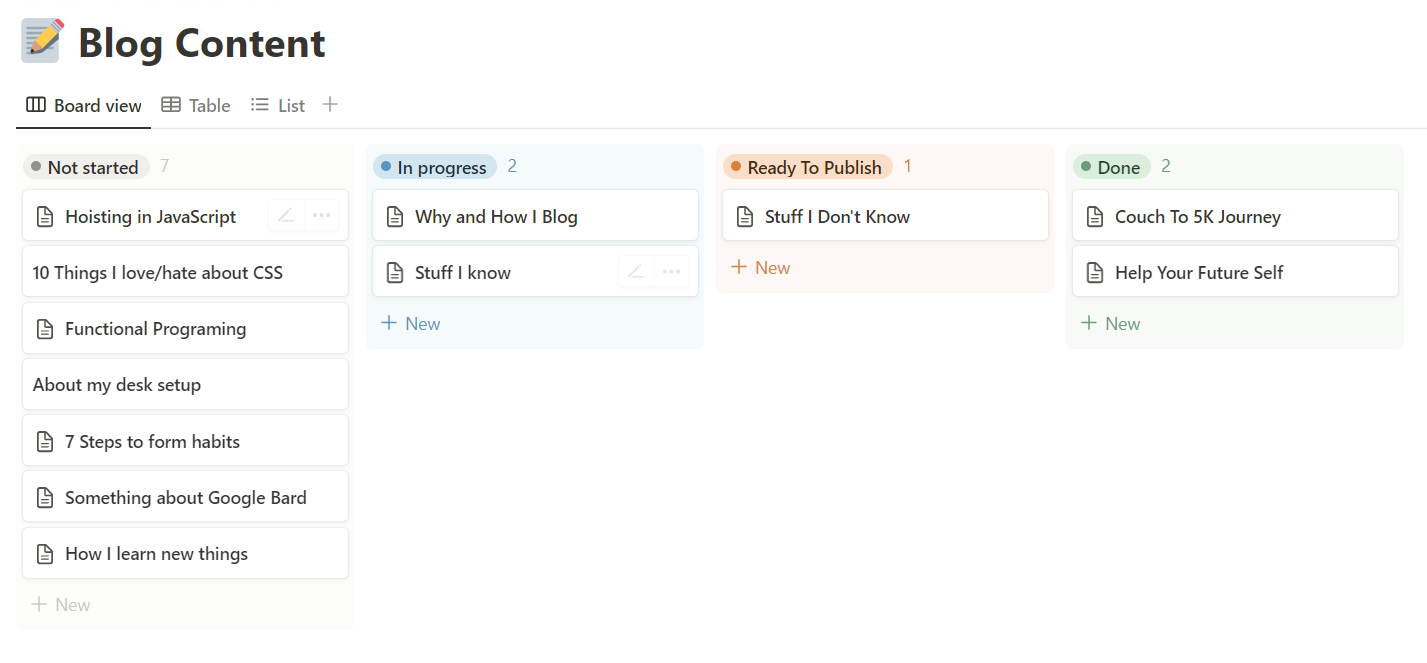

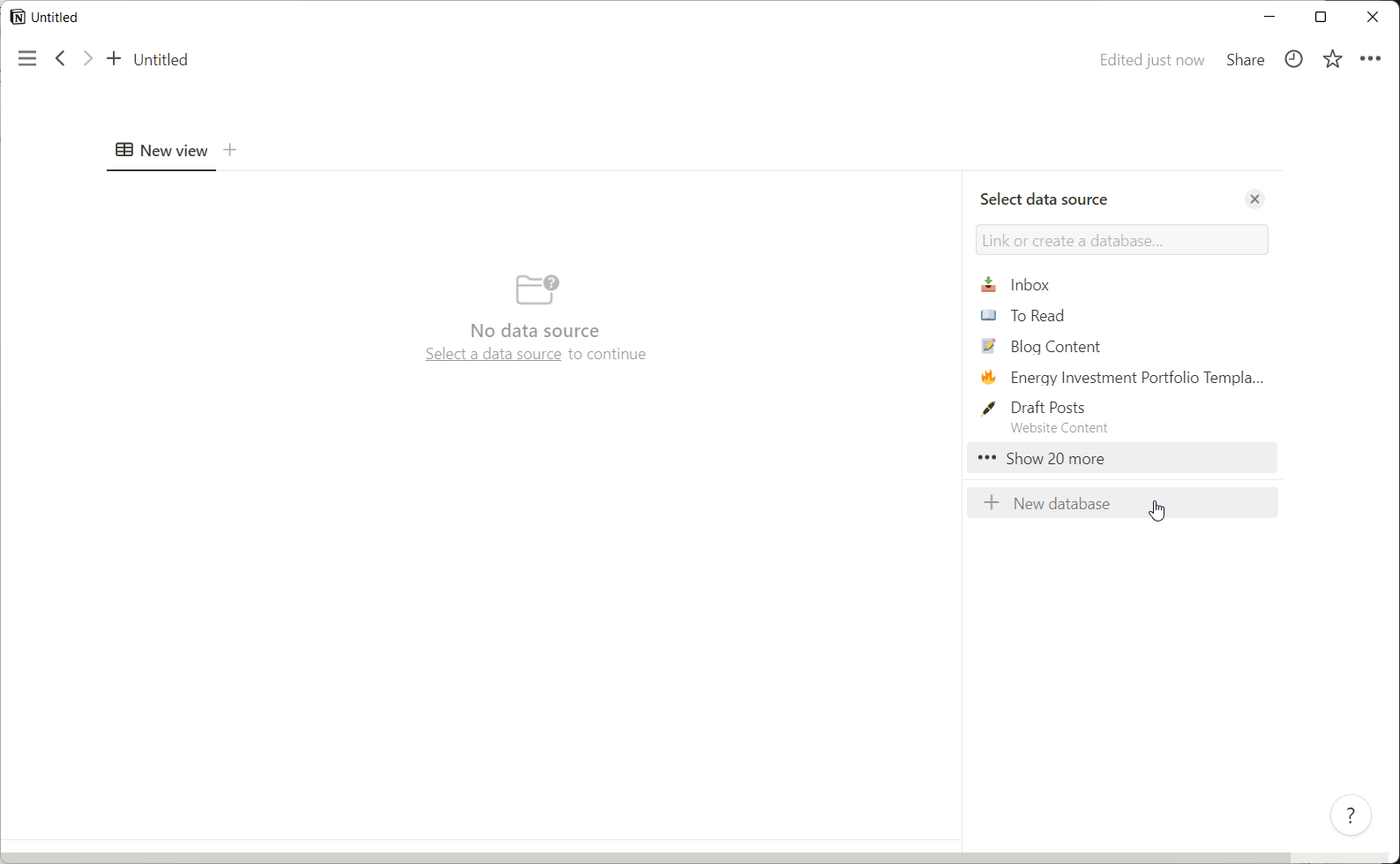

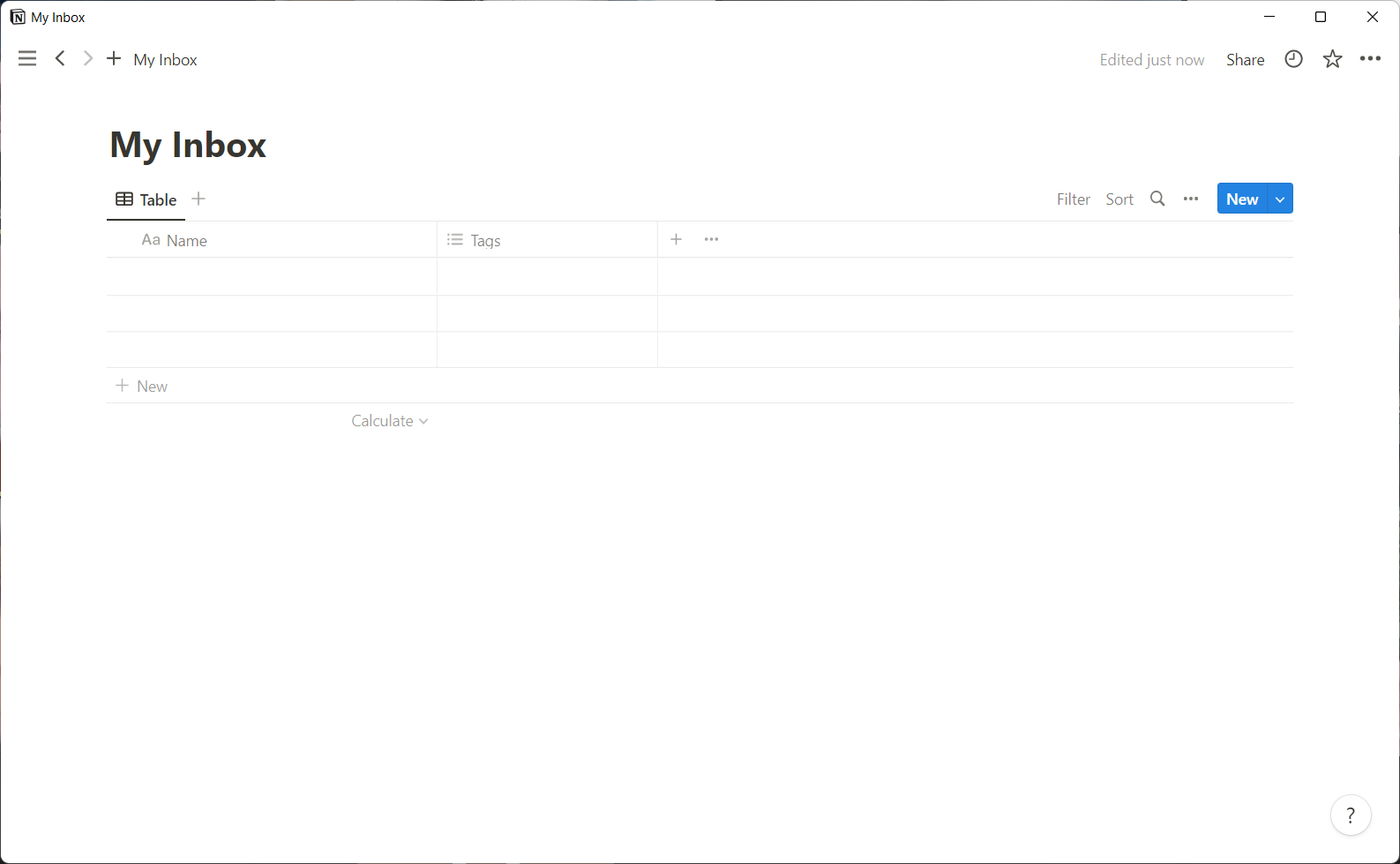

Notion is almost infinitely flexible. All of your data is organised into pages and databases, and you can view the same piece of data in multiple ways to suit your style. A list of items can be shown with checkboxes as a simple to-do list, on a Kanban-style board to track progress, or even on a calendar if each item has a relevant date field.

Pages can be nested inside, and linked to from, other pages, creating a giant web of interconnections that makes Notion feel more like a well-structured personal website than a note-taking app.

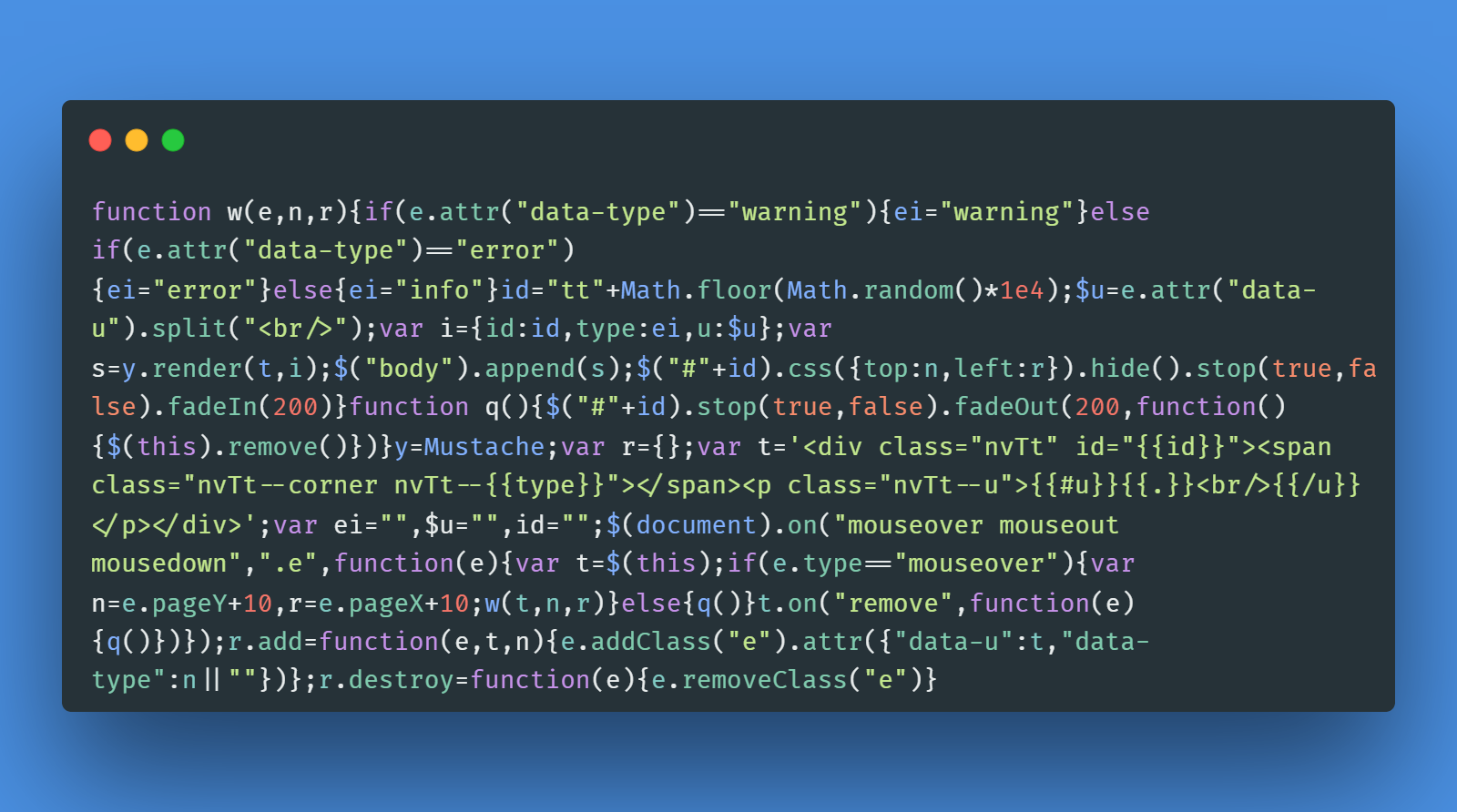

Hit ctrl/cmd+P to bring up the Quick Find dialogue box and you can instantly jump to any other page in your workspace. It’s worth noting that this is the same shortcut as VS Code’s Quick Open menu, a good sign that developers are one of Notion’s target audiences.

Moving data around is simple too. Every paragraph, heading, image, table and any other piece of data you have is stored as a ‘block’, and blocks can be dragged and dropped to rearrange a page or moved into another page entirely.

Getting Started with Templates

Out of the box, Notion ships with over 50 page templates to get you started. They range from meeting notes, product roadmaps, to-do lists, reading lists and class notes to wikis, mood boards, goal setting and much more. And if you still can’t find what you’re looking for, there are even more created by the Notion community. (The community is pretty active, with lots of resources on the Notion website and Reddit.)

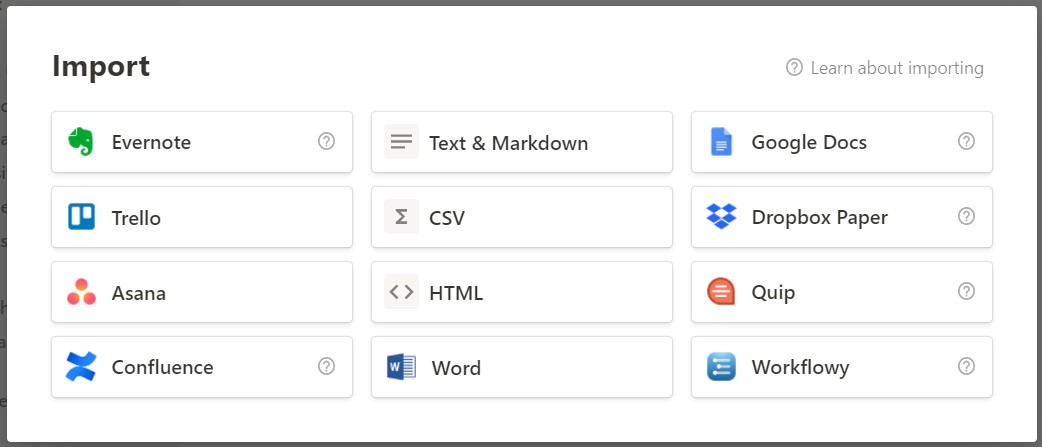

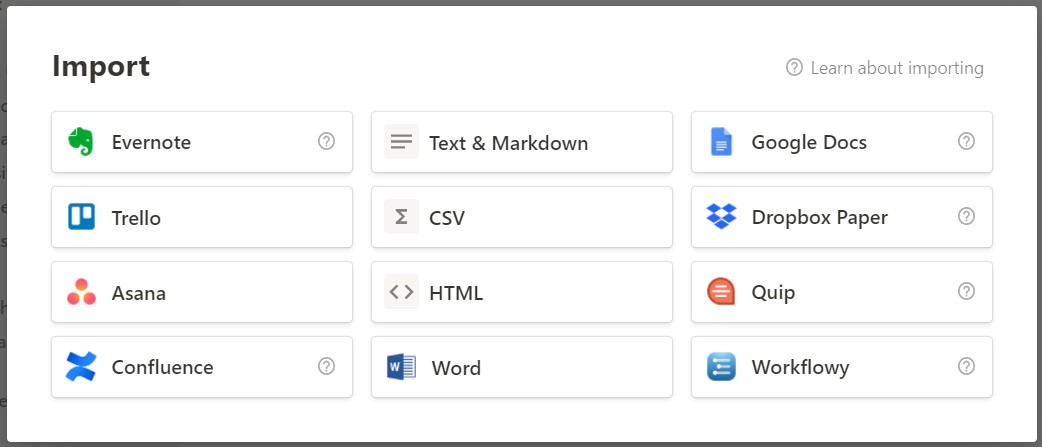

Importing your data

Bringing your data into Notion is pretty simple. There is an import function for a lot of common data sources, and this is exactly how I ported all my data over from Evernote when I made the switch.

It’s almost perfect. If there are any formatting or structure issues, it’s quite simple to correct them once the data is in Notion.

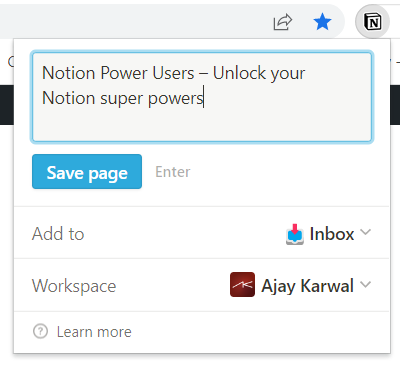

You can also get data in via browser extensions. I use this all the time, especially when saving recipes. Notion does a great job of pulling in only the relevant information, ignoring things like comments, adverts and navigation menus (something the Evernote Web Clipper was pretty bad at).

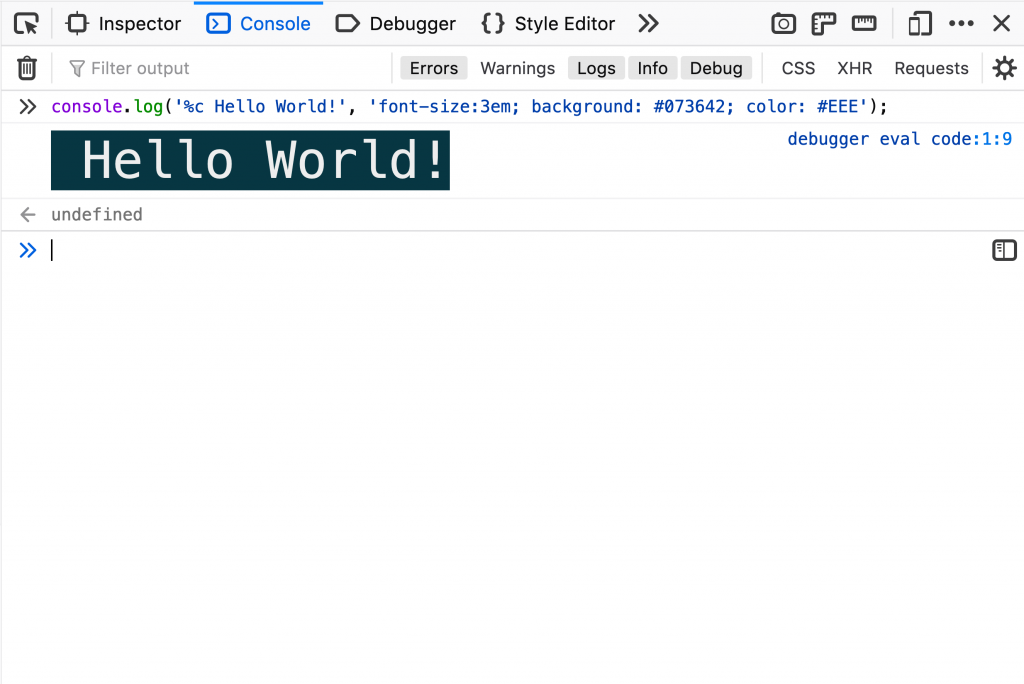

Markdown

I love Markdown, and it was the number one reason I wanted to get away from Evernote as quickly as possible.

Markdown gets out of the way and lets you just focus on writing, while using simple modifiers to apply styling where needed.

There’s no need for extra toolbars to apply styling (although Notion does show one when you select text, if you need it), and no need to worry about formatting when sharing your notes elsewhere. It just works.

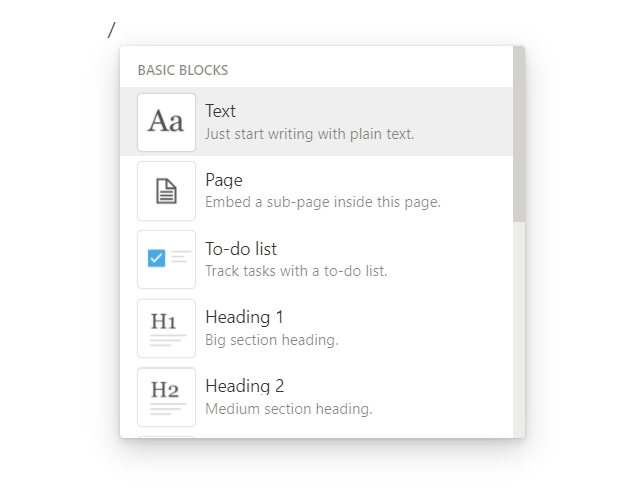

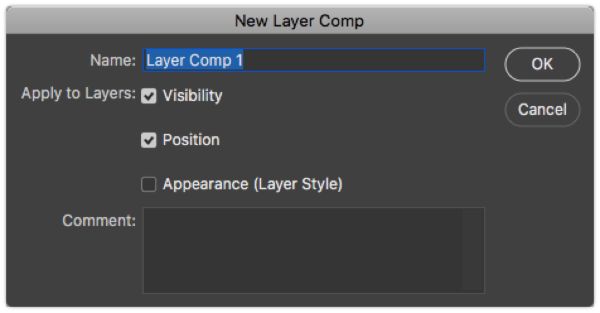

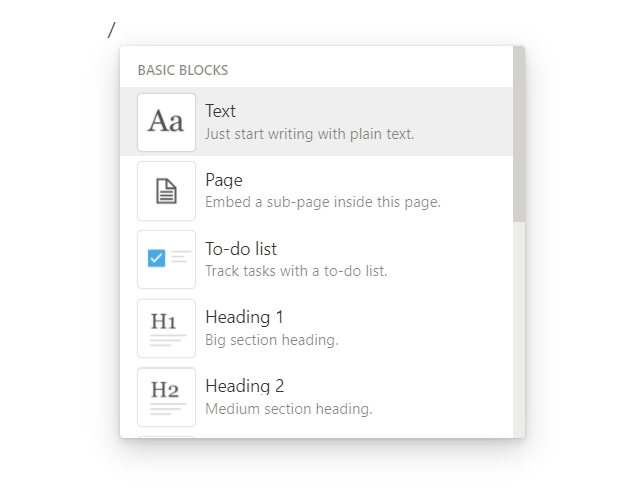

Quick Menus

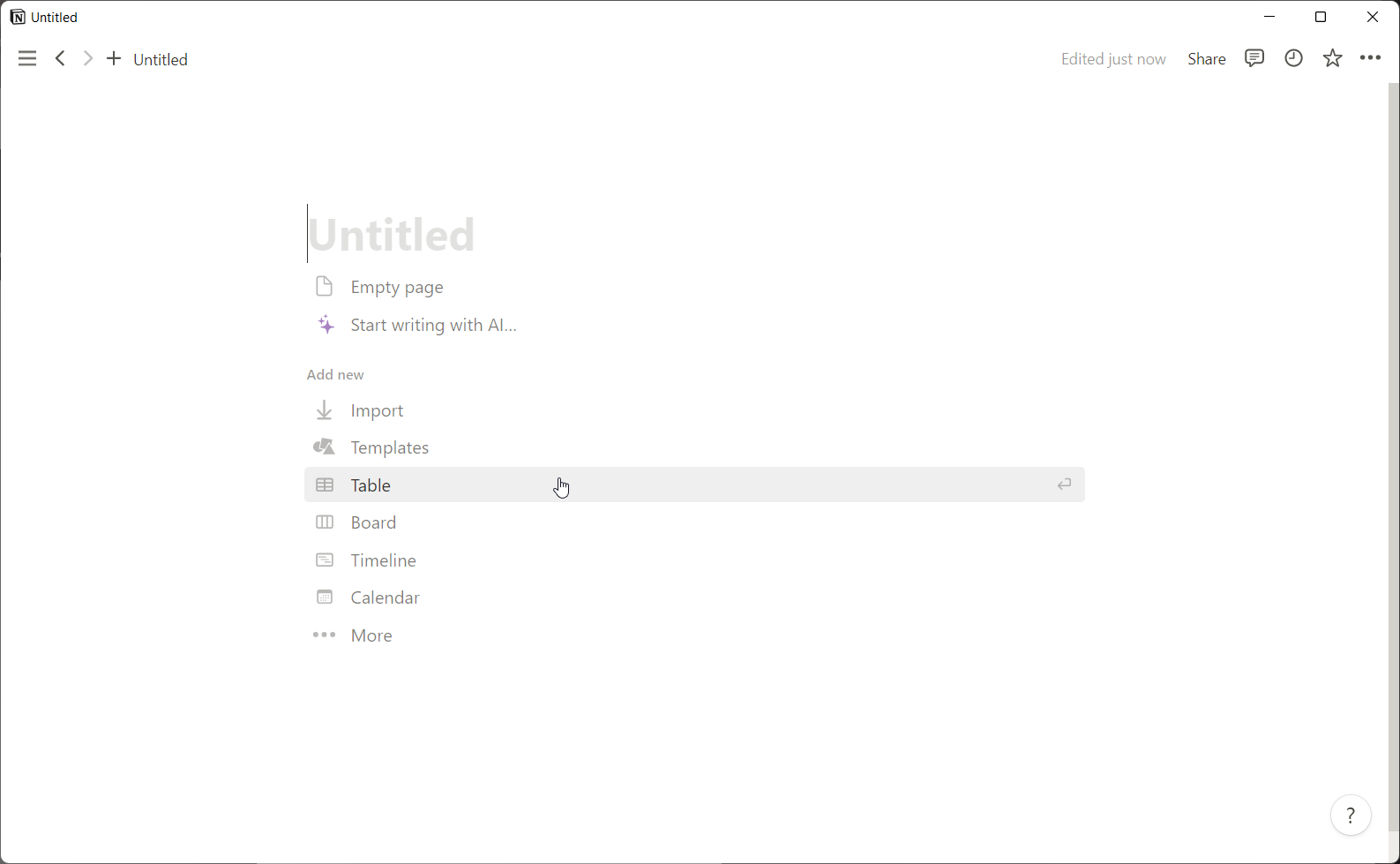

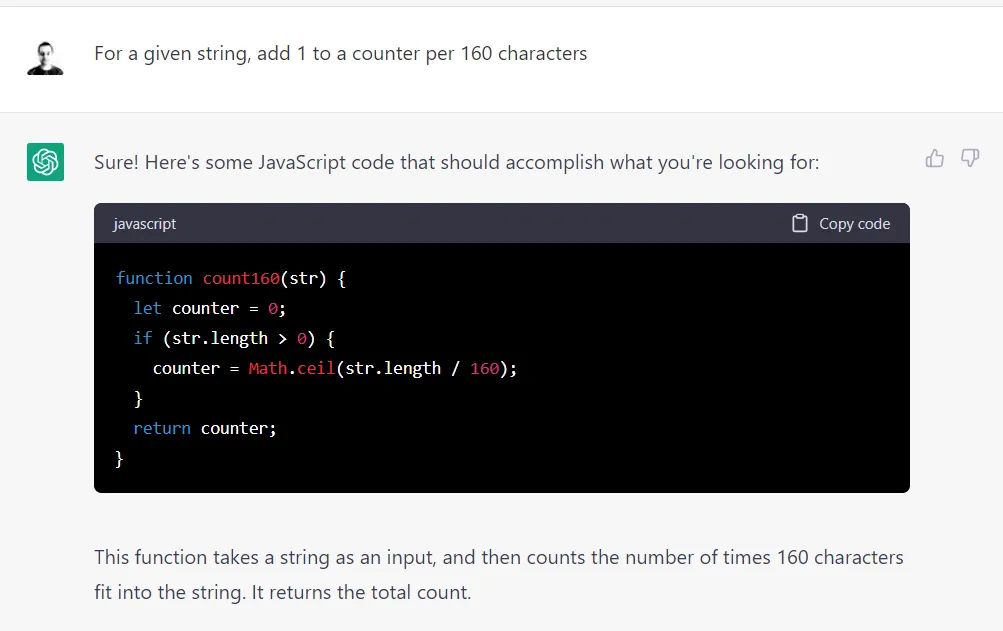

Notion has a neat little Block menu which is available via the / key at any point.

A block in Notion is any piece of content such as a heading, page, to-do list item, quote, divider, media, embed and many more.

The menu also filters as you type, so typing /div and pressing Enter quickly inserts a Divider block.

There’s also a handy Actions menu via ctrl/cmd+/, which gives you actions specific to the block you’re currently focused on. You can delete, share, add styling and even convert the block into a different type, which is one of my favourite features!

There are a bunch of other keyboard shortcuts for navigating your way around Notion, so it’s great for power users while still being simple enough for complete beginners.

Embeds

Paste in a URL for an image, YouTube video, tweet, PDF, SoundCloud track or pretty much any other type of media, and Notion will do all the heavy lifting to embed an interactive widget for the content or, at the very least, a nice preview with a link back to the original.

This is great if you’re using Notion as a repository for collating information from various sources. Leave the original data where it currently is and use the Embed feature to surface it in your Notion pages.

Wrapping up

This only scratches the surface of what is possible with Notion, and I’m constantly learning new things and tweaking the way my data is organised. I use Notion as a ‘read later’ service via the browser extension, a filing cabinet for important documents, a digital recipe book, and even a place to plan blog posts and podcast episodes. The way I’ve set up Notion is very personal to me, and it will be completely different from the way you organise yours, which is one of the things I love about the Notion community. It’s fascinating to see how people use Notion and the pride they take in setting up their dashboard screens.

Notion is free to use (with paid options), with unlimited content blocks and no device limit (Evernote… take note of this!). It’s available on iOS, Android, Windows, Mac and the web, with a consistent experience across all of them.
